Eleven v3 Audio Tags: Expressing emotional context in speech

- Category

- Resources

- Date

How we build AI systems that communicate in real time - covering the technical decisions behind turn-taking, latency, and expressive delivery, and the models we have shipped.

Interaction models are AI systems built to communicate with people in real time across audio, video, and text without the turn-based delays that define many chatbots today.

We have been building toward this category for years. This post lays out what we have shipped, and the research and product decisions behind it.

Amanda, an ElevenAgents voice agent, handles an inbound emergency loan call - navigating a distressed customer from panic to resolution in under two minutes.

Three things have to hold together for an interaction system to work well and create engaging natural interactions:

*Refers to model inference time only. Actual end-to-end latency will vary with factors such as your location and endpoint type used.

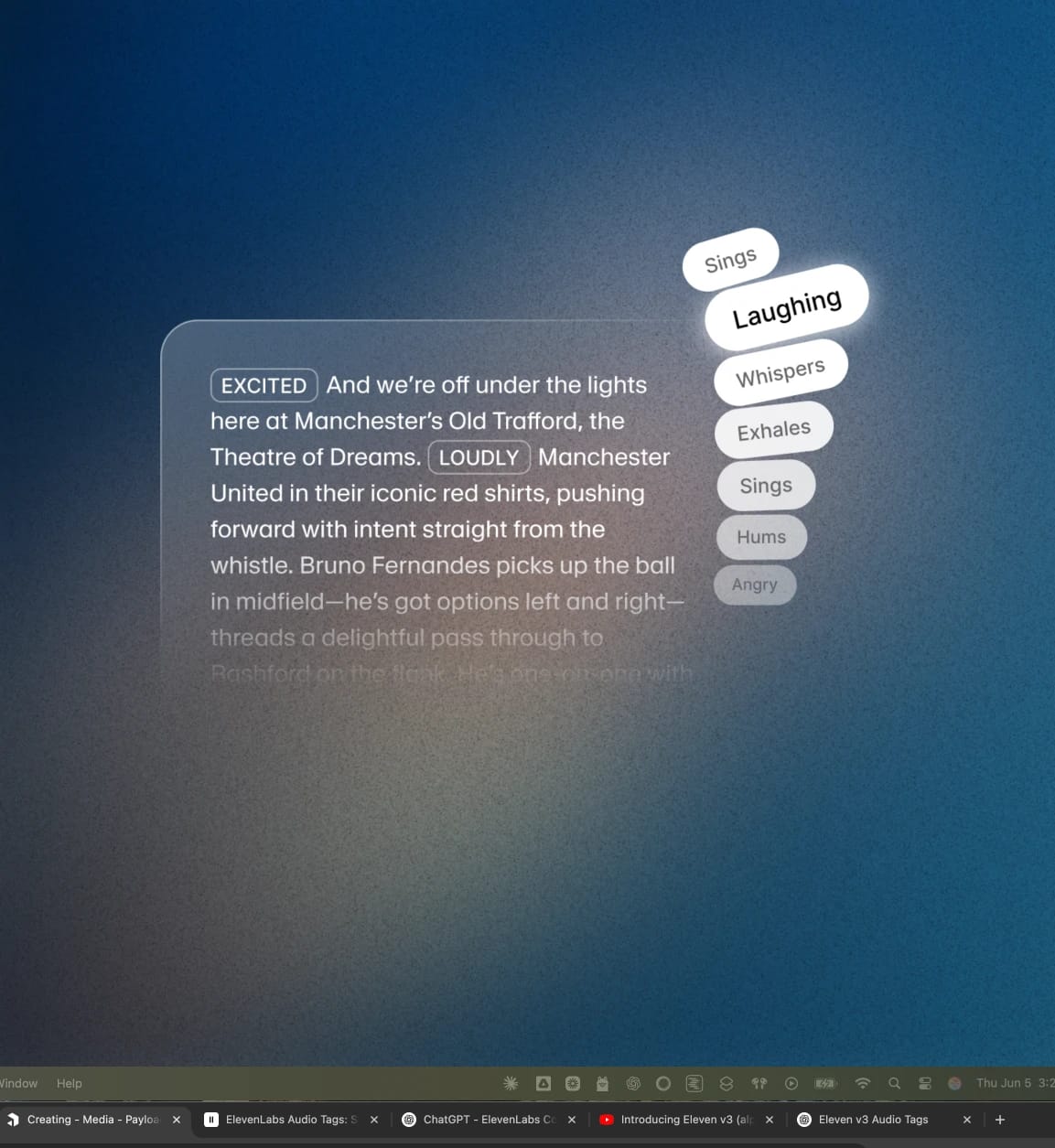

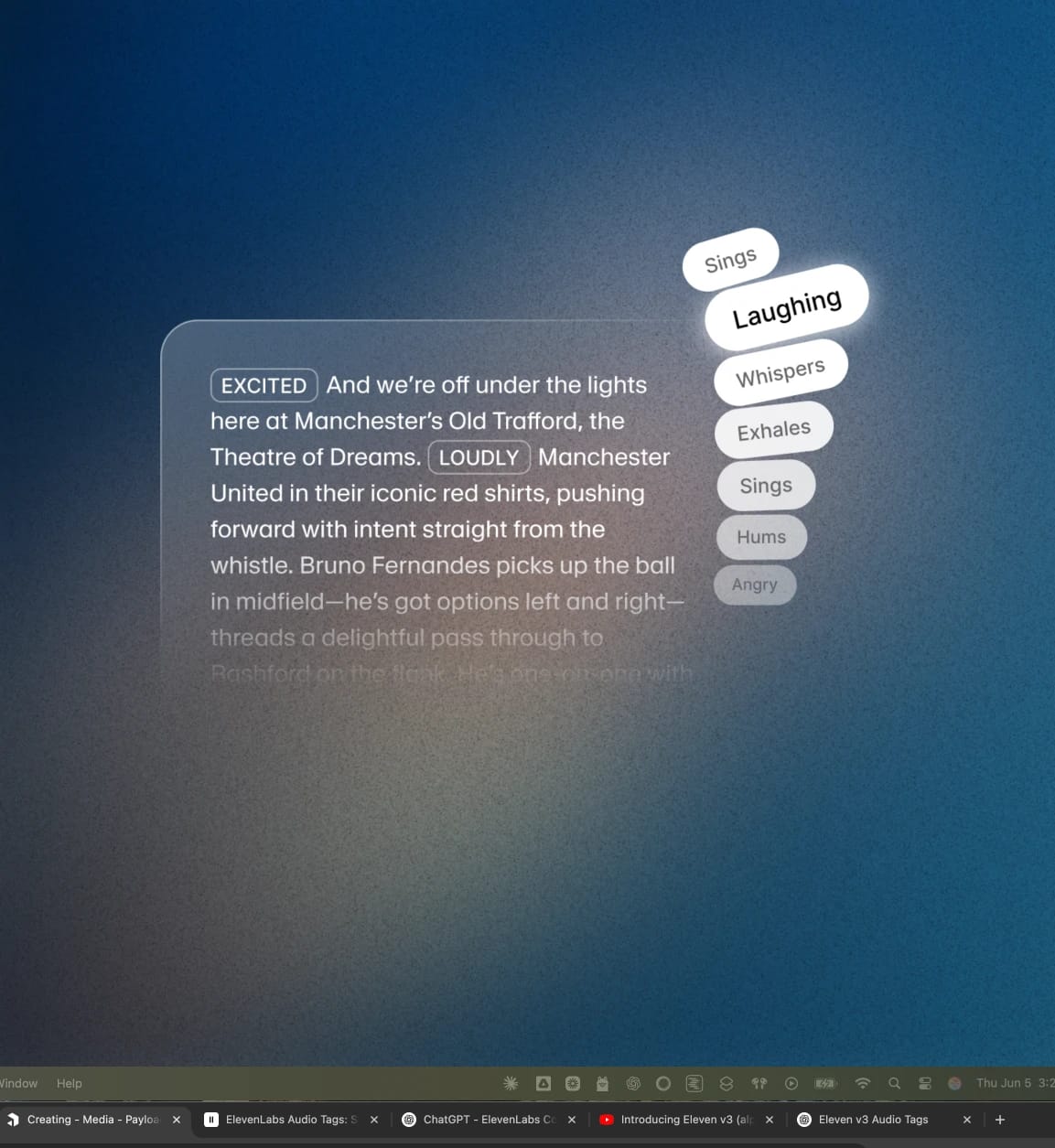

Eleven v3 - our most expressive Text to Speech model. Designed to shift register, laugh, and sigh with natural delivery.

Eleven v3 Conversational. Our conversational variant of v3, launched inside ElevenAgents in February 2026 with built-in turn-taking. The turn-taking model is enabled by default when v3 Conversational is selected as the TTS model.

Speculative turn-taking. A separate feature in v3 Conversational that pre-triggers LLM response generation during periods of user silence, reducing perceived latency.

Flash v2.5. Our fastest Text-to-Speech model, designed for low-latency real-time use, at ~75ms inference.*

Scribe v2. Our Speech-to-Text model with industry-leading accuracy.

ElevenAgents Expressive Mode. Lets agents use expressive tags such as [laughs], [whispers], [sighs], and [slow] to control delivery in context.

*Refers to model inference time only. Actual end-to-end latency will vary with factors such as your location and endpoint type used.