Brilliant launches personal tutor powered by ElevenLabs

Category: ElevenAgents Stories

Trusted by 1M+ users • Free to start

Narration

Expressive voices that bring audiobooks and podcasts to life.

Advertisement

Persuasive voices that drive action and brand recall.

Characters

Playful and engaging voices for cartoons or video games.

Conversational

Natural voices perfect for informal scenarios.

Social Media

Trendy, attention-grabbing voices for short-form content.

Our voice AI responds to emotional cues in text and adapts its delivery to suit both the immediate content and the wider context. This lets our AI voices achieve high emotional range and avoid making logical errors when your content is read aloud.

Create controllable, expressive speech layered with emotion, audio events, and immersive soundscapes.

The voice paused for a moment, [softly] as if gathering its thoughts before continuing. Every breath felt intentional, every hesitation perfectly timed.

This wasn't synthetic speech anymore [laughs warmly] - it was a voice that understood timing, emotion, and the space between words.

Text transformed into presence. [sighs contentedly] Words given life, personality, soul.

Explore an ever-growing collection of expressive, lifelike voices for any use case - from narration to character creation.

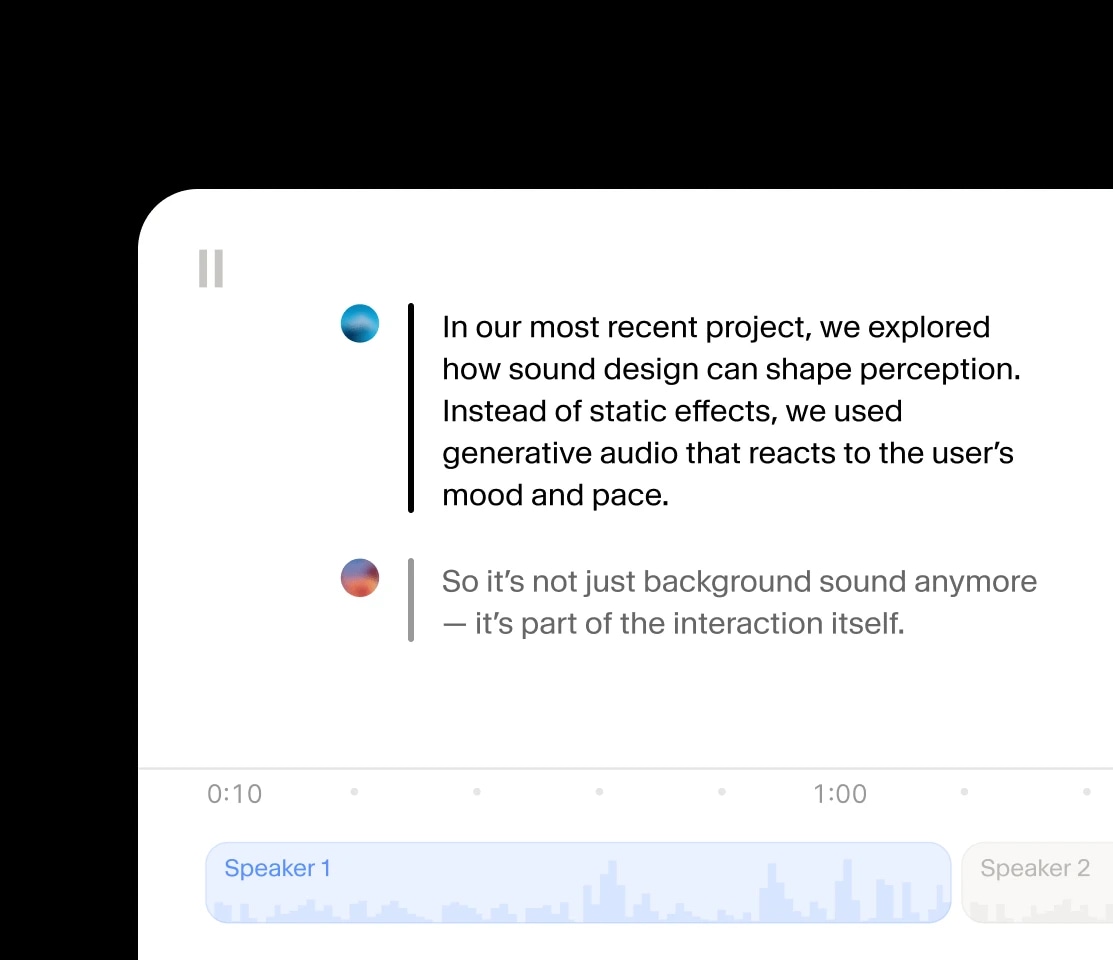

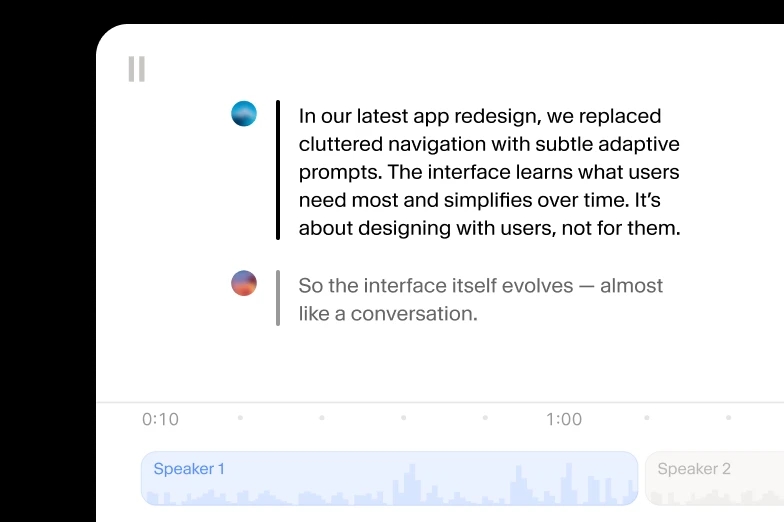

Create audio conversations where speakers share context and emotion.

Instantly replicate your own voice or craft unique AI Voices with full control.

Bring stories to life in over 70 languages, all with native-level emotion and clarity.

Our most advanced, expressive model with audio tags for precise emotional control. Best for storytelling, gaming and media production in 70+ languages.

Our most lifelike, emotionally rich text to speech model supporting 29 languages. Best for voiceovers, audiobooks, post-production and content creation.

Our high-quality, low-latency TTS model in 32 languages. Best for developer use cases where speed matters and you need non-English languages.

High-quality, low-latency model with a good balance of quality and speed.

The best AI audio models in one powerful editor.

Generate expressive audio in seconds using our iOS and Android apps.

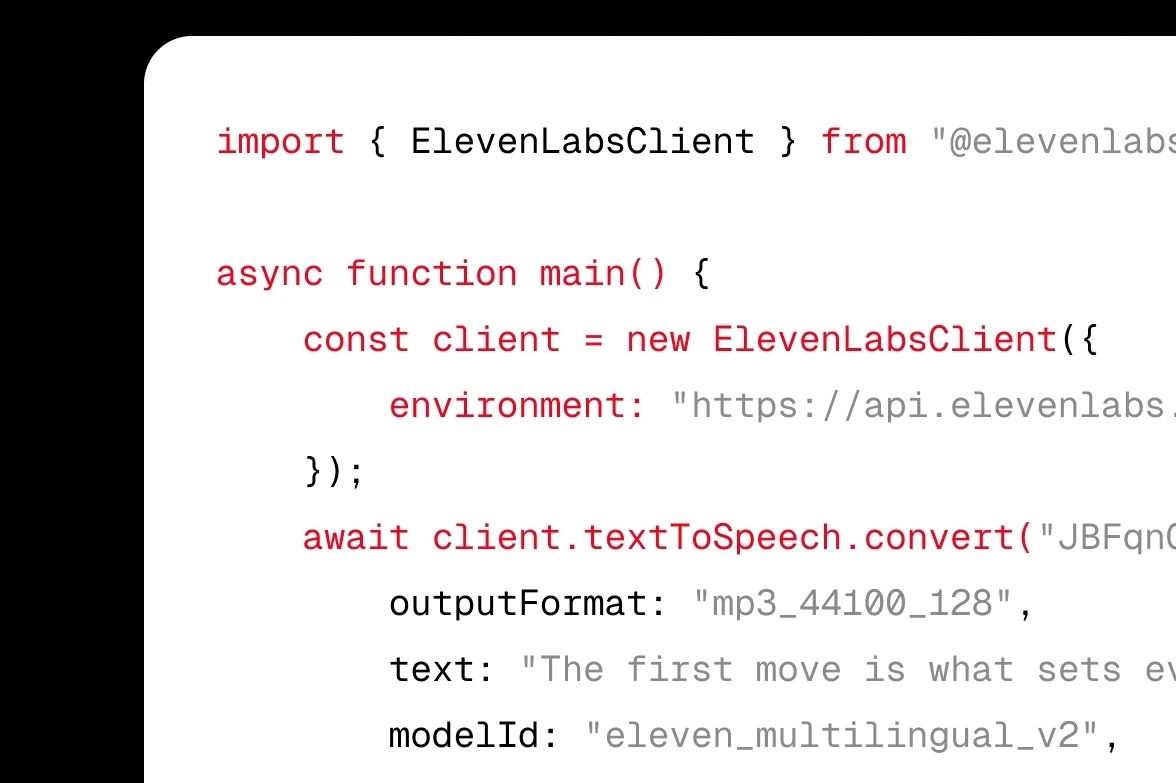

Integrate ElevenLabs Text to Speech (TTS) into your product via APIs or SDKs.
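As a rough illustration of what an API integration might look like, the sketch below builds (but does not send) an HTTP request using only the Python standard library. The base URL, the `text-to-speech/{voice_id}` path, the `xi-api-key` header, and the `text` / `model_id` field names are assumptions that should be checked against the current API reference. `build_tts_request` and the placeholder values are hypothetical names used only for this example.

```python
import json
import urllib.request

# Assumed base URL; verify against the current API reference before use.
API_BASE = "https://api.elevenlabs.io/v1"


def build_tts_request(api_key: str, voice_id: str, text: str,
                      model_id: str = "eleven_multilingual_v2") -> urllib.request.Request:
    """Build (without sending) a POST request for a text to speech call.

    The path, header name, and JSON fields are assumptions for illustration.
    """
    body = json.dumps({"text": text, "model_id": model_id}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/text-to-speech/{voice_id}",
        data=body,
        headers={"xi-api-key": api_key, "Content-Type": "application/json"},
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` would perform the call. Keeping construction separate from sending makes the request easy to inspect and test without network access or credentials.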

Yes, ElevenLabs offers two ways to create a custom voice:

Instant Voice Cloning lets you create a digital version of any voice from a short audio sample (around 1 minute). It's fast, available on paid plans, and ideal for getting started quickly.

Professional Voice Cloning uses 30+ minutes of high-quality recorded audio to build a highly realistic clone that captures the accent, emotional range, and vocal traits of the original speaker.

Both options are designed with safety in mind. You must have permission to clone any voice, and we use AI Speech Classifier technology to detect cloned audio. Once created, your voice can be used across Text to Speech, Studio, Dubbing, and the API in 32+ languages.

ElevenLabs gives you access to over 11,000 voices, including:

• Hundreds of premade voices spanning different ages, accents, tones, and styles.

• Thousands of community-shared voices in the Voice Library, searchable by language, gender, accent, and use case.

• Iconic voices from television and film for read-aloud and narration.

If you can't find the perfect match, you can also:

• Use Voice Design to generate a brand-new AI voice from a text prompt describing how it should sound.

• Use Voice Cloning to create a digital version of your own voice (with permission).

This is one of the largest voice libraries available in an AI text to speech platform.

The ElevenLabs free plan includes 10,000 characters per month, which is enough to generate roughly 10 minutes of audio. You also get access to:

• The full Text to Speech generator with premade voices.

• Voice Cloning (Instant Voice Cloning on paid plans).

• The Text to Speech API for developers.

• Generation in 32+ languages.
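The free-plan figures above (10,000 characters for roughly 10 minutes of audio) imply about 1,000 characters per minute. The sketch below turns that into a quick estimate. The rate is only an approximation derived from those two numbers, actual duration will vary with voice and content, and the function names are hypothetical.

```python
# Approximate rate derived from "10,000 characters is roughly 10 minutes".
CHARS_PER_MINUTE = 10_000 / 10


def estimated_minutes(text: str) -> float:
    """Rough audio duration, in minutes, for a piece of text."""
    return len(text) / CHARS_PER_MINUTE


def remaining_characters(monthly_quota: int, used: int) -> int:
    """Characters left this month, never below zero."""
    return max(monthly_quota - used, 0)
```

For example, a 2,500-character script would come out at about 2.5 minutes and leave 7,500 characters of a 10,000-character monthly allowance.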

Paid plans start at a low monthly cost and unlock more characters, faster generation, Professional Voice Cloning, commercial use rights, and higher concurrency for production workloads.

Yes. Paid ElevenLabs plans include full commercial usage rights for the audio you generate, meaning you can use it in YouTube videos, podcasts, advertisements, audiobooks, films, games, and apps without paying additional royalties.

The free plan is intended for personal, non-commercial use and requires attribution to ElevenLabs. If you need to monetise your content or use audio in client work, upgrading to a paid plan unlocks full commercial usage rights.*

*Commercial rights are subject to our Terms of Use and Prohibited Use Policy.

ElevenLabs offers several Text to Speech models, each tuned for a different use case:

• Eleven v3 - Our most expressive and emotionally rich model, with support for inline audio tags like [whispers], [laughs], and [excited]. Best for long-form content, audiobooks, film, and dramatic voiceovers.

• Multilingual v2 - The most stable and lifelike model for high-quality content production across 29 languages. Best for narration and post-production.

• Flash v2.5 - Ultra-low-latency model (sub-500ms end-to-end) supporting 32 languages. Best for real-time conversational AI, agents, and live applications.

• Turbo v2.5 - A balance of quality and speed, suited for high-throughput use cases that still need natural delivery.

Most users start with Multilingual v2 for content and switch to Flash for anything real-time.
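The guidance above can be written down as a small decision helper. This is only a sketch: the order in which the conditions are checked is an assumption, the function and its parameters are hypothetical, and it returns the display names used in this list rather than API identifiers.

```python
def pick_model(real_time: bool = False,
               needs_audio_tags: bool = False,
               high_throughput: bool = False) -> str:
    """Suggest a model name from the list above for a given use case."""
    if real_time:
        return "Flash v2.5"        # lowest latency, for agents and live apps
    if needs_audio_tags:
        return "Eleven v3"         # inline tags such as [whispers] or [laughs]
    if high_throughput:
        return "Turbo v2.5"        # balance of quality and speed
    return "Multilingual v2"       # default for content production
```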

Yes. ElevenLabs Flash v2.5 delivers sub-500ms end-to-end latency, making it one of the fastest production-ready text to speech models available. The Text to Speech API supports audio streaming, so you can start playing speech to your users while the rest of the response is still being generated.

This makes ElevenLabs ideal for:

• Conversational AI and voice agents that need natural-feeling response times.

• Live customer support, telephony, and IVR systems.

• Real-time gaming NPCs and interactive experiences.

• Voice-enabled apps where every millisecond matters.

For full conversational use cases, ElevenAgents combines Text to Speech, Speech to Text, and an LLM into a single low-latency voice agent platform.
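To illustrate why streaming helps perceived latency, the sketch below forwards each audio chunk to a playback callback as soon as it arrives and records how long the first chunk took. It does not call the ElevenLabs API: `fake_stream` is a hypothetical stand-in for a streamed HTTP response body, and `play_while_streaming` is an illustrative name, not part of any SDK.

```python
import time
from typing import Callable, Iterable, Iterator, Tuple


def play_while_streaming(chunks: Iterable[bytes],
                         play: Callable[[bytes], None]) -> Tuple[int, float]:
    """Hand each chunk to `play` as it arrives.

    Returns (total_bytes, seconds_until_first_chunk); the delay is -1.0
    if the stream produced no chunks at all.
    """
    start = time.monotonic()
    first_chunk_delay = -1.0
    total = 0
    for chunk in chunks:
        if first_chunk_delay < 0:
            first_chunk_delay = time.monotonic() - start
        play(chunk)            # playback starts before the stream has finished
        total += len(chunk)
    return total, first_chunk_delay


def fake_stream(n_chunks: int = 3, chunk_size: int = 4) -> Iterator[bytes]:
    """Stand-in for a network response; yields silent placeholder bytes."""
    for _ in range(n_chunks):
        yield b"\x00" * chunk_size
```

In a real client, `chunks` would be an iterator over the response body and `play` would write to an audio device or a telephony socket.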

ElevenLabs Text to Speech supports a wide range of output formats so you can plug audio into any workflow:

• MP3 - Standard format for podcasts, YouTube, and general listening.

• WAV / PCM - Uncompressed audio for studio work, dubbing, and post-production.

• µ-law - Optimised for telephony and call-centre integrations.

You can also choose your sample rate and bitrate via the API to balance quality and bandwidth for your specific use case.
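The quality and bandwidth trade-off comes down to simple arithmetic. The sketch below estimates bytes per second for each format family. It assumes mono audio, 16-bit samples for PCM, and the conventional 8 kHz, 8-bit encoding for µ-law telephony audio. These defaults are assumptions for the example rather than statements about what the API returns.

```python
def pcm_bytes_per_second(sample_rate_hz: int,
                         bits_per_sample: int = 16,
                         channels: int = 1) -> int:
    """Uncompressed audio: samples per second times bytes per sample."""
    return sample_rate_hz * bits_per_sample * channels // 8


def mp3_bytes_per_second(bitrate_kbps: int) -> int:
    """Compressed audio: the bitrate already states bits per second."""
    return bitrate_kbps * 1000 // 8


# Conventional telephony encoding: 8 kHz sample rate, 8 bits per sample.
ULAW_BYTES_PER_SECOND = pcm_bytes_per_second(8000, bits_per_sample=8)
```

Under these assumptions, 44.1 kHz PCM needs about 88 KB per second, a 128 kbps MP3 about 16 KB per second, and µ-law about 8 KB per second.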

ElevenLabs takes data security seriously and is trusted by leading enterprise customers. Our compliance posture includes:

• SOC 2 Type II certified.

• ISO 27001 certified.

• PCI DSS Level 1 certified.

• GDPR compliant.

• HIPAA-eligible workflows for healthcare.

Your text input is not used to train our models without your consent. Enterprise customers can enable Zero Retention Mode for eligible services.*

Voice clones are protected by AI Speech Classifier technology that can detect AI-generated audio.

*For ZRM-eligible services, where ZRM is correctly enabled, certain types of data are not retained. See documentation for details.

Yes. ElevenLabs gives you several ways to fine-tune how your text is spoken:

• Audio tags (Eleven v3) - Use inline tags like [whispers], [laughs], [excited], or [sighs] to direct delivery and emotion.

• Voice settings - Adjust stability, similarity, and style to control how expressive or consistent the voice sounds.

• Pronunciation dictionaries - Define exactly how brand names, technical terms, or unusual words should be spoken.

• SSML support - Use Speech Synthesis Markup Language tags for precise control over pauses, emphasis, and phonemes via the API.

These controls let you go from raw text to studio-quality narration without re-recording.
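As a small illustration of two of these controls, the sketch below extracts inline audio tags from a script and assembles a voice-settings dictionary. The assumption that each setting lies between 0 and 1 is ours, the dictionary keys simply mirror the names used above and may not match the API's field names, and both function names are hypothetical.

```python
import re
from typing import Dict, List

# Matches bracketed inline tags such as [whispers] or [laughs warmly].
TAG_PATTERN = re.compile(r"\[([^\[\]]+)\]")


def find_audio_tags(text: str) -> List[str]:
    """Return the inline audio tags found in a script, in order."""
    return TAG_PATTERN.findall(text)


def build_voice_settings(stability: float, similarity: float,
                         style: float) -> Dict[str, float]:
    """Clamp each setting to the assumed 0..1 range."""
    def clamp(value: float) -> float:
        return max(0.0, min(1.0, float(value)))

    return {
        "stability": clamp(stability),
        "similarity": clamp(similarity),
        "style": clamp(style),
    }
```

Listing the tags before generation is a cheap way to catch typos in a long script, and clamping keeps out-of-range values from reaching a request.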

Yes, many learners use ElevenLabs as an AI pronunciation coach. Because our voices sound like real native speakers across 32+ languages and dozens of regional accents, you can:

• Hear how any word, phrase, or full passage sounds in another language.

• Compare British, American, Australian, Indian, and other English accents.

• Practice listening comprehension with longer passages of natural speech.

• Generate audio for vocabulary lists, dialogues, and reading exercises.

The free plan gives you 10,000 characters per month, enough for daily practice sessions, and ElevenReader lets you import articles and books to listen to on the go.

ElevenLabs voice AI combines proprietary methods for context awareness and high compression to deliver ultra-realistic, high-quality speech across a range of emotions.

Our contextual text to speech model is built to understand the relationships between words and adjust its delivery accordingly. It also has no hardcoded features, meaning it can dynamically predict thousands of voice characteristics.

What sets ElevenLabs apart from other TTS providers:

• Over 11,000 voices in the Voice Library, plus Voice Design and Voice Cloning.

• Low-latency generation (~75ms model inference*) with Flash v2.5, ideal for real-time agents and apps.

• Support for 32+ languages with native-quality accents.

• Eleven v3 model with audio tags for emotion, laughter, whispering, and more.

• Trusted by 100,000+ developers and leading enterprise customers.

*Refers to model inference time only. Actual end-to-end latency will vary with factors such as your location and the endpoint type used.

Yes. ElevenLabs supports text to speech in 32+ languages across our model lineup, with high-quality native accents in each.

Multilingual v2 supports 29 languages for the highest-quality long-form content. Flash v2.5 supports 32 languages with low-latency generation for real-time applications. Eleven v3 (alpha) also supports a broad set of languages with the most expressive, emotional delivery.

Languages include English, Spanish, French, German, Italian, Portuguese, Polish, Hindi, Japanese, Chinese, Korean, Arabic, Russian, Dutch, Turkish, Swedish, Indonesian, Filipino, Ukrainian, Greek, Czech, Finnish, Romanian, Danish, Bulgarian, Malay, Slovak, Croatian, Tamil, Norwegian, Hungarian, and Vietnamese.

ElevenLabs Text to Speech is free to start. The free plan includes 10,000 characters per month (around 10 minutes of audio), access to premade voices, and the API.

Paid plans start at a low monthly price and unlock:

• More characters per month (up to millions on higher tiers).

• Commercial usage rights for monetised content.

• Professional Voice Cloning for hyper-realistic custom voices.

• Higher concurrency and faster generation for production use.

• Priority access to new models like Eleven v3.

Enterprise plans add SSO, custom contracts, dedicated support, and Zero Retention Mode for eligible services.
